An essay inspired by the book What to Think About Machines that Think, edited by John Brockman

Machines that think are already among us. They’re also so far out in the future that they might never arrive.

Machines that think give us reason for great hope. They also should cause us great concern.

We might be thinking in entirely the wrong way about machines that think.

They’re with us

It’s quite possible that what we generally think of as machines that think—artificially intelligent machines created by humans and capable of the same kinds of higher-level, deep thought as humans—might never come into being. I’m not saying they won’t; but there’s enough debate in the scientific and tech communities, among people who know a lot more about the subject than I do, to make my speculation on the matter pointless.

What makes a great deal more sense to me is to consider the machine technology that already enhances and prolongs human life. I’m not just referring to the Internet and smartphones and self-driving cars and all of the other tech devices that are separate from us, that are other. I’m talking about a somewhat grayer area: machine-enhanced people.

We’re not talking about superheroes

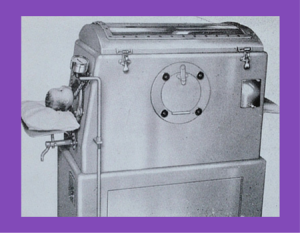

If you’re of a certain age (meaning old), the names Steve Austin and Jaime Sommers might ring a bell. They were, in the pretend land that is television, the Six Million Dollar Man and the Bionic Woman, respectively: people enhanced by technology to have superhuman powers. Human, but other. We might not exactly have the Six Million Dollar Man and Bionic Woman living among us, but we do have Oscar Pistorius and others with truly amazing artificial limbs, some of which can be controlled through brain waves. We have neural implants that help patients with Parkinson’s Disease and other conditions. We’ve used iron lungs since the late 1920s, and by 2002 there were 3 million people worldwide living with pacemakers.

The lines have blurred. In a sense, we already have thinking machines at work in the world; they are us.

We have enhanced ourselves with machine technology. And we will continue to do so. Rather than be afraid of what thinking machines might do to humanity, I’m amazed and thrilled by what they already do for humanity.

But is there a gray area here? With all of the ways in which science and technology already enhance human life, is it possible that we might be blinded to an area in which machine technology or artificial intelligence could pose a threat? It’s possible. And that’s cause for concern, especially in a country where we have established a legal precedent that non-human entities (corporations) deserve the same legal rights as people. Have we considered, for example, whether that legal precedent could be extended to machines or artificial intelligence systems, or whether that’s a good idea? We already have “intelligent” networks that track our movements on the internet and can predict and recommend websites and pages we might want to visit in the future. Will we at some point extend civil rights to these networks or the organizations that operate them? I, for one, think that we should not; but the U.S. approach to corporate speech gives me pause.

We have only ourselves to fear

More than one author in the book What to Think About Machines that Think, a compilation of essays edited by John Brockman, expresses the concern that it is not the machines we are creating that should be feared, but our own willingness to cede control and decision-making to them. I have to agree. It seems much less likely to me that we will create evil machines that are both capable of and committed to controlling people, than that people will happily cede control of their lives to any entity willing to take it. I’m less worried about a robot take-over than a corporate take-over.

John C. Mather predicts in It’s Going to Be a Wild Ride, one of the book’s essays, that we will, in fact, develop general artificial intelligence, “and it will be in the service of the usual powerful forces: business, entertainment, medicine, international security and warfare, the quest for power at all levels, crime, transportation, mining, manufacturing, shopping, sex, anything you like. I don’t think we’re all going to like the results. … I don’t know who’ll be smart enough to keep the genie under control—because it’s not just machines we might need to control, it’s the unlimited opportunity (and payoff) for human-directed mischief.”

Indeed. Now there’s something to fear.

Unthinking humans

I’m also concerned about our willingness to trust machines without equipping ourselves with the knowledge needed to function without them. GPS is great, but let’s not stop learning to read and understand maps. Calculators save us enormous amounts of time, and mathematical calculation is a job that’s perfectly suited to machines. But I don’t believe we should stop teaching calculation skills to our children. They might not need to add, subtract, multiply and divide in their everyday work lives when they reach adulthood, but they darned well need to know how to do those calculations, if only to check the work of a machine or to continue functioning in society when electronic systems break down. And who’s to say what other skills might suffer if we don’t teach our brains to calculate? Learning cursive writing stimulates brain development in ways that far outweigh the value of actually writing in cursive; learning basic math probably has similar benefits for brain development.

“We have little to fear from thinking machines and more to fear from the increasingly unthinking humans who use them.”

~W. Tecumseh Fitch, Nano-Intentionality

Heaven help us if we don’t grow and exercise and nurture the critical thinking skills that set us apart from machines. We need them to grow as human beings and as a culture, and to protect ourselves from the powerful entities that are most likely to control artificial intelligence systems.

We also need them to ponder the ethics of the world we are creating, to think through the new challenges posed by technology and our use of it. As neuroscientist Sam Harris writes in Can We Avoid a Digital Apocalypse?, “We seem to be in the process of building a god. Now would be a good time to wonder whether it will (or even can) be a good one.”

The good news is that there are people thinking about these questions. Smart people. The bad news is that intelligent, thoughtful ideas are often drowned out or ignored in this society. We are not always a thinking people; witness the rise of presidential candidates who tout their very lack of credentials as evidence of their fitness for one of the most powerful jobs in the world.

Pay attention, world. Don’t let laziness—physical, intellectual or emotional—distract you from the important issues we must confront.
